The information value chain I wrote about a while back, although in need of further refinement, underpins how I think about the business case for data portability.

In this post, I am going to give a brief illustration of how interoperability is a win-win for all involved in the digital media business.

To do this, I am going to use the following companies as examples:

– Amazon (S3)

– Facebook

– Yahoo! (Flickr)

– Adobe (Photoshop Express)

– SmugMug

– Cooliris

How the world works right now

I’ve listed six different companies, each of which can provide services for your photos. Using a simplistic view of the market, they are all competitors – they ought to be fighting to be the ultimate place where you store your photos. But the reality is, they aren’t.

Our economic system is underpinned by a concept known as “comparative advantage”. It means that even if you are the best at everything, you are better off specialising in one area and letting another entity perform the other functions. In world trade, different countries specialise in different industries, because focusing on what you are uniquely good at and working with other countries for the rest is a lot more efficient.
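To make that concrete, here is a small worked sketch. Every number in it is made up for illustration: two hypothetical sites (`site_a` and `site_b`), two jobs (storage and community), and a budget of 12 hours each. `site_a` is faster at both jobs, yet the combined output still goes up when each site leans towards the job it gives up the least to do.

```python
# Hypothetical figures: hours each site needs to produce one unit of each job.
HOURS_PER_UNIT = {
    "site_a": {"storage": 1, "community": 2},  # faster at both jobs
    "site_b": {"storage": 6, "community": 3},
}
BUDGET = 12  # hours each site has available (an assumption for this sketch)


def opportunity_cost(site, job, other_job):
    """Units of other_job a site gives up to produce one unit of job."""
    costs = HOURS_PER_UNIT[site]
    return costs[job] / costs[other_job]


def total_output(plan):
    """Sum the units produced across all sites for a plan of {site: {job: hours}}."""
    totals = {"storage": 0.0, "community": 0.0}
    for site, hours_by_job in plan.items():
        # Every site must spend exactly its budget.
        assert sum(hours_by_job.values()) == BUDGET
        for job, hours in hours_by_job.items():
            totals[job] += hours / HOURS_PER_UNIT[site][job]
    return totals


# Plan 1: every site tries to do everything, splitting its time evenly.
do_everything = {
    "site_a": {"storage": 6, "community": 6},
    "site_b": {"storage": 6, "community": 6},
}

# Plan 2: site_b does only community work; site_a leans towards storage.
specialise = {
    "site_a": {"storage": 10, "community": 2},
    "site_b": {"storage": 0, "community": 12},
}

print(opportunity_cost("site_a", "storage", "community"))  # 0.5
print(opportunity_cost("site_b", "storage", "community"))  # 2.0
print(total_output(do_everything))  # {'storage': 7.0, 'community': 5.0}
print(total_output(specialise))     # {'storage': 10.0, 'community': 5.0}
```

With these invented figures, a unit of storage costs `site_a` half a unit of community work but costs `site_b` two units, so storage is `site_a`'s comparative advantage. Specialising lifts total storage from 7 to 10 units while community output stays at 5.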

Which is why I take a value chain approach when explaining data portability. Different companies and websites should have different areas of focus – in fact, as we all know, one website can’t do everything. That is not just because of a lack of resources, but because of the conflict it can create in allocating them. For example, a community site doesn’t want to have to worry about storage costs, because it is better off investing in resources that support its community. Trying to do both may make the community site fail.

How specialisation makes for a win-win

With that theoretical understanding, let’s now look into the companies.

Amazon

They have a service that allows you to store information in the cloud (i.e., not on your local computer, and always accessible via a browser). Amazon’s economies of scale allow it to create the most efficient storage system on the web. I’d love to be able to store all my photos here.

Facebook

Most of the people I know in the offline world are connected to me on Facebook. It’s become a useful way for me to share my life with my friends and family, and to stay permanently connected with them. I often get asked by my friends to make sure I put my photos on Facebook so they can see them.

Yahoo

Yahoo owns a company called Flickr – which is an amazing community of people passionate about photography. I love being able to tap into that community to share and compare my photos (as well as find other people’s photos to use in my blog posts).

Adobe

Adobe makes the industry-standard program for graphic design: Photoshop. When it comes to editing my photos – everything from cropping them and removing red-eye to converting them into different file formats – I love using Photoshop. They now offer an online version, Photoshop Express, which provides functionality similar to the desktop program, but in the cloud.

SmugMug

I actually don’t have a SmugMug account, but I’ve always been curious. I’d love to be able to see how my photos look in their interface, and to tap into some of the features they have available, like printing them in special ways.

Cooliris

Cooliris is a cool web service I’ve only just stumbled on. I’d love to be able to plug my photos into the system, and see what cool results come out.

Putting it together

- I store my photos on Amazon, including my massive RAW picture files which most websites can’t read.

- I can pull my photos into Facebook, and tag them how I see fit for my friends.

- I can pull my photos into Flickr, and get access to the unique community competitions, interaction, and feedback I get there.

- With Adobe Photoshop Express, I can access my RAW files on Amazon to create edited versions of my photos, based on the feedback I received in the comments on Flickr.

- With those edited photos now sitting on Amazon, and with the tags I have on Facebook adding better context to my photos (friends tagging people in them), I pull those photos into SmugMug and create really funky prints to send to my parents.

- I can take the same photos I used in SmugMug into Cooliris, and create a funky screensaver for my computer.

As a customer of all these services – that’s awesome. With the same set of photos, I get the benefit of all these services, each of which provides something unique for me.

And as a supplier that is providing these services, I can focus on what I am good at – my comparative advantage – so that I can continue adding value to the people that use my offering.
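For the technically minded, the workflow above can be sketched as one shared store with several specialised services working on the same photos. This is only a toy model under my own assumptions: `PhotoStore`, `Service`, and every method name here are hypothetical, and none of this is the real API of any of the companies mentioned.

```python
from dataclasses import dataclass, field


@dataclass
class Photo:
    photo_id: str
    filename: str
    # Notes added by each service, keyed by service name.
    annotations: dict = field(default_factory=dict)


class PhotoStore:
    """Hypothetical single home for the photos (the storage specialist)."""

    def __init__(self):
        self._photos = {}

    def put(self, photo):
        self._photos[photo.photo_id] = photo

    def get(self, photo_id):
        return self._photos[photo_id]


class Service:
    """Hypothetical specialised service that works on photos held in the store."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def annotate(self, photo_id, note):
        # The service adds its own value to the shared photo instead of
        # keeping a private copy of it.
        photo = self.store.get(photo_id)
        photo.annotations.setdefault(self.name, []).append(note)

    def context(self, photo_id):
        # Any service can reuse what the other services have contributed.
        return dict(self.store.get(photo_id).annotations)


store = PhotoStore()
store.put(Photo("p1", "beach.raw"))

community = Service("community", store)
social = Service("social", store)
printing = Service("printing", store)

community.annotate("p1", "comment: crop out the bin on the left")
social.annotate("p1", "tag: Mum")

# The printing service sees one photo, enriched by the other two services.
print(printing.context("p1"))
```

The point of the design is that the photo lives in one place and each service only adds what it is good at, so the printing service can draw on the community feedback and the social tags without any of the three having to store or duplicate the photo.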

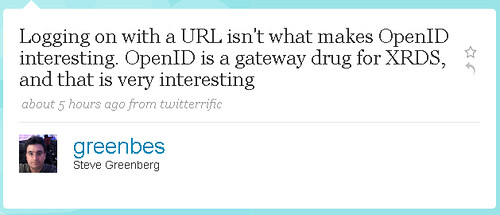

Sounds simple enough, eh? Well, the word for that is “interoperability”, and it’s what we are trying to advocate at the DataPortability Project: a world where data does not have borders and can be reused again and again. What’s stopping us from having a world like this? Basically, the simplistic thinking that one site should try to do everything rather than focus on what it does best.

Help us change the market’s thinking and demand for data portability.